Models Template Library Reference

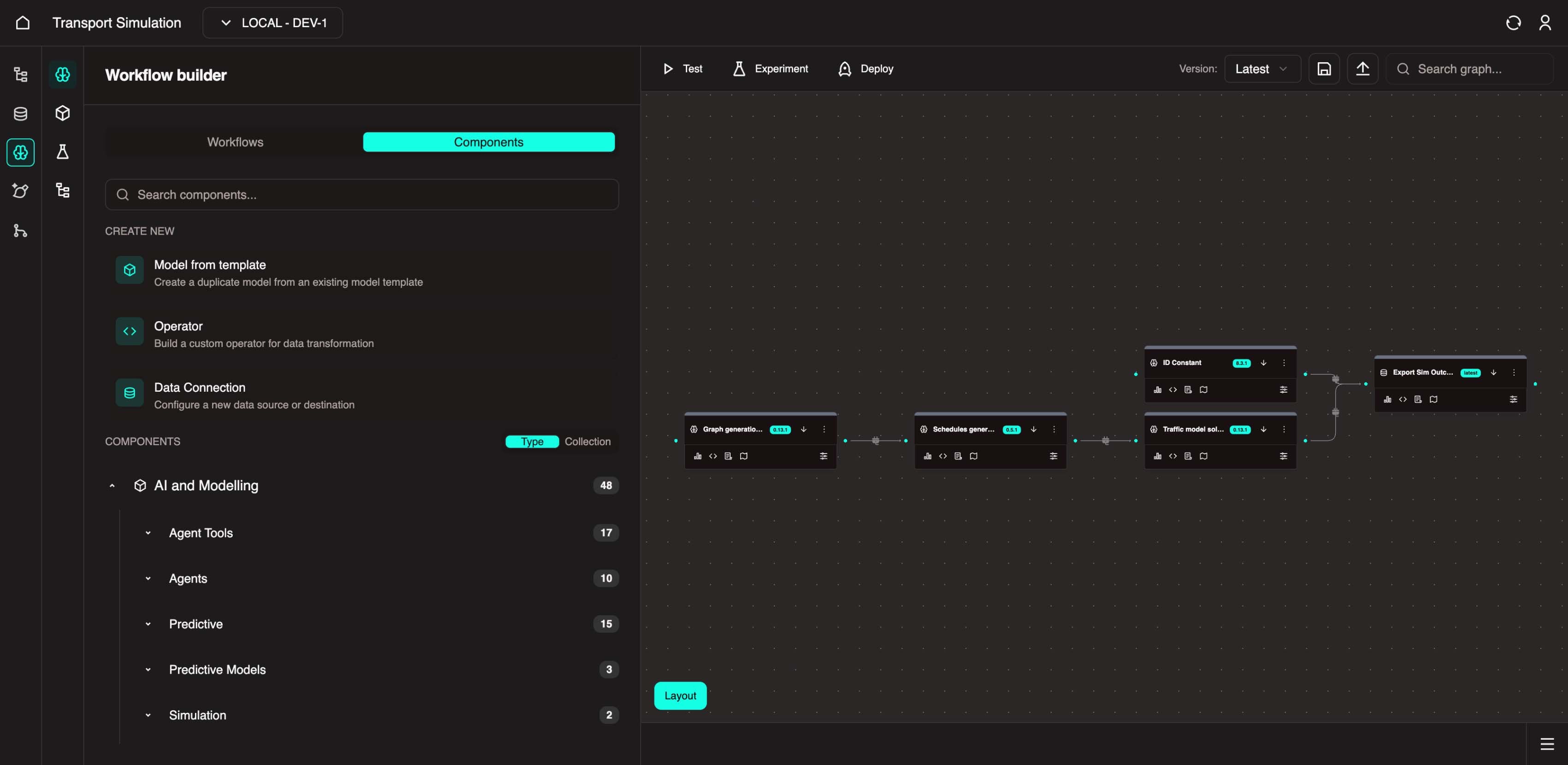

The library of prebuilt models helps you build workflows faster. You can drag and drop these pre-made components to customise a workflow quickly, without needing a detailed understanding of how each component works internally. The library is available in the MDK under the 'components' tab.

What You'll Learn

- Available data loaders and ingestion components

- AI agent and MCP server tools

- Linear systems and traffic model components

- Schedule generation and timeseries analysis tools

- Statistical models and experiment management utilities

Data Loaders [OR-AST-DATA-LOADER]

| Component [Component Name in SDK] | Description | Use Case | Language |

|---|---|---|---|

| Upload JSON Raw Data [Dataloading - JSON Raw Data Upload] | Upload raw JSON bytes into the DDK | As a workflow builder, I want to upload raw JSON data directly to the DDK so that I can pass dynamic data through the workflow that is regularly updated | Golang |

| Transform JSON [Dataloading - JSON Transform] | Alters and transforms a JSON file | As a workflow builder, I want to transform my JSON data (edit column names, structures etc) so that the table structure conforms to what I need | Golang |

| Upload CSV String [Dataloading - CSV String Upload] | Upload a single raw CSV string into the DDK | As a workflow builder, I want to upload raw CSV string data directly to the DDK so that I can pass dynamic data through the workflow without having the file saved on my local device | Golang |

| CSV Bulk Upload [Dataloading - CSV Upload Bulk] | Upload multiple CSV files to the specified destination across tables in bulk | As a workflow builder, I want to be able to upload multiple CSV files so that I can easily have all my data on the platform to perform analyses on | Golang |

| Raw SQL Query [Dataloading - Raw SQL Query] | Provides SQL queries to allow users to interrogate the database | As a workflow builder, I want SQL queries readily available so that I can query my database without extensive knowledge of SQL | Golang |

| Transform CSV [Dataloading - JSON Data Upload] | Alters and transforms a CSV file | As a workflow builder, I want to transform my CSV data (edit column names, structures etc) so that the table structure conforms to what I need | Golang |

| Upload JSON Data [Dataloading - JSON Data Upload] | Upload JSON files from local device to the platform | As an analyst, I want to upload my JSON file from my device to the OR platform so that I can perform the analyses I need for my work | Golang |

| Export CSV [Dataloading - CSV Export] | Export CSV data from specified tables or from all database tables to CSV format | As a data analyst/engineer, I want to be able to export the data output from the workflow so that I can run further analysis or reporting as required | Golang |

| Generate CSV Mock Data [Dataloading - CSV Mock Data Generation] | Create mock data in a table | As a workflow builder, I want to create mock data to fill a table so that I can test different use cases, workflow capabilities and connections | Golang |

| Upload CSV [Dataloading - CSV Mock Data] | Upload CSV file from local device to the platform | As an analyst, I want to upload my CSV file from my device to the OR platform so that I can use that data in my model workflow for further analysis | Golang |
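
The idea behind the CSV string upload and transform components can be sketched with the Python standard library. This is an illustration only, not the components' Golang implementation: the function names `parse_csv_string` and `rename_columns`, and the sample column names, are hypothetical.

```python
import csv
import io


def parse_csv_string(raw: str) -> list[dict[str, str]]:
    """Parse a raw CSV string (header row first) into a list of row dicts."""
    return list(csv.DictReader(io.StringIO(raw)))


def rename_columns(rows: list[dict[str, str]], mapping: dict[str, str]) -> list[dict[str, str]]:
    """Return new rows with column names replaced according to `mapping`.

    Columns that are not listed in `mapping` keep their original name.
    """
    return [{mapping.get(key, key): value for key, value in row.items()} for row in rows]


# Hypothetical sample data: a CSV held in memory rather than saved to disk.
raw_csv = "id,temp_c\n1,21.5\n2,19.0\n"
rows = rename_columns(parse_csv_string(raw_csv), {"temp_c": "temperature"})
print(rows)
```

All values stay as strings here; type conversion would be a separate step.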

Data Ingestions [OR-AST-DATA-INGESTION]

| Component [Component Name in SDK] | Description | Use Case | Language |

|---|---|---|---|

| GTFS-R to GeoJSONTrip [DataIngestion - GTFS-R to GeoJSONTrip] | Converts GTFS-R vehicle positions to GeoJSONTrip | As a data engineer for a Transport Entity, I need to convert vehicle position data to GeoJSON Trip so that it can be integrated into the model in the right format | Python |

| General API Ingestion [DataIngestion - Generic API Ingestion] | Ingests raw data from a user-specified source with optional tagging | As a model builder, I want to be able to fetch data from an external source so that I can use it with other models | Python |

| Ingest Location GTFS-R [DataIngestion - Location GTSF-R Ingestion] | Ingests raw location data from a GTFS-R source with optional tagging | As a model builder, I want to be able to fetch specifically GTFS-R data from an external source so that I can use it with other models | Python |

| Ingest Schedule GTFS-R [DataIngestion - Schedule GTFS-R Ingestion] | Ingests raw schedule data from a GTFS-R source with optional tagging | As a model builder, I want to be able to fetch specifically GTFS-R data from an external source so that I can use it with other models | Python |

AI Agents/Applications [OR-AST-MCP-SERVER]

| Component [Component Name in SDK] | Description | Use Case | Language |

|---|---|---|---|

| Generate PowerPoint Slides [AI-TOOL - PowerPoint Generate Slides MCP] | Generates PowerPoint slides from available data | As a BI Analyst, I want to take the insights generated through OR and create general comms so that I can present these insights to the wider team / organisation in a digestible way | Python |

| RAG Query MCP [AI_TOOL - RAG Query MCP] | Performs a similarity search on the data to match the user's query to items within the database and provide an answer | As an analyst, I want to be able to query the available data and generate a response with no hallucinations so that I can quickly and easily draw insights from my data | Python |

| Web Scraper Search [AI_TOOL - Web Scraper Search MCP] | Searches the web, scrapes results, and provides summaries | As a market researcher, I want to be able to search the web and generate a summary so that I can quickly include a high level scan in my findings | Python |

| General Data MCP [AI_TOOL - General data MCP] | Fetches general data from an API | As a data analyst, I want to access data through an API so that I can include a wide range of data into my model / workflow | Python |

| Read File [AI_TOOL - Read File MCP] | Reads content from a file in the file system | As a data engineer, I want to easily read the contents from an uploaded file so that I don't have to manually extract the data | Python |

| Fetch GTFS Data [AI_TOOL - GTFS Data MCP] | Fetches GTFS (transit) data from an API | As a data engineer, I want to easily fetch GTFS data that considers the complexities with this data type so that I can reduce manual work when building my models | Python |

| Write File [AI_TOOL - Write File MCP] | Writes content to a file in the file system | As a data engineer, I want an automated way to write content to a file so that I can spend more time on more complex components of the data model | Python |

| PostgreSQL Query [AI_TOOL - PostgreSQL Query MCP] | Executes a PostgreSQL query automatically | As a data engineer, I want queries to be automatically executed so that the workflow can run independently of my intervention and I can focus on more complex tasks | Python |

| Read Files from Directory [AI_TOOL - Read Files from Directory MCP] | Reads all files from a specified directory in the file system | As a model builder, I want to read all files from a specified directory in an automated way so that I don't need to spend excessive amounts of time on this task | Python |

| Single URL Web Scraper [AI_TOOL - Web Scraper Single URL MCP] | Scrapes content from a single URL and provides a summary | As an analyst, I want an easy way to create a summary from a website so that I can quickly generate insights for my work | Python |

| Get File Info [AI_TOOL - Get File Info MCP] | Retrieves information about a file in the file system | As a data analyst, I want to know the metadata of a file in the system so that I can understand the source and ensure traceability | Python |

| Send Email [AI_TOOL - Send Email MCP] | Sends an email using the configured email settings | As a project manager, I want to be able to send emails to my team with preconfigured settings so that I can distribute information with minimal human intervention | Python |

| Multiple URL Web Scraper [AI_TOOL - Web Scraper Get URLs MCP] | Gets search result URLs for a query without scraping content | As a data analyst, I want a list of URLs based on my query so that I know where I can get the right information for my research | Python |

| RAG Load Document [AI_TOOL - RAG Load Document MCP] | Loads a document or directory into the vector store | As a model builder, I want to easily load docs and entire directories into a vector store so that I can save time that would be spent loading these one by one | Python |

| Explain PostgreSQL [AI_TOOL - PostgreSQL Explain MCP] | Explains a PostgreSQL query | As an analyst, I want to know what the PostgreSQL queries are doing in my workflow so that I can better understand each task and how to use them | Python |

| Delete All Items [AI_TOOL - RAG Delete All Items MCP] | Deletes all items from the vector store | As a model builder, I want to easily delete docs or entire directories from a vector store so that I don't spend excess time manually deleting each item | Python |
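
The similarity search that the RAG Query MCP description refers to can be illustrated with a toy example. The sketch below assumes a bag-of-words "embedding" and cosine similarity purely for demonstration; the real component presumably uses a proper embedding model and vector store, and the names `embed`, `cosine_similarity`, and `top_match` are hypothetical.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a stand-in for a real embedding model)."""
    return Counter(text.lower().split())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two sparse count vectors (0.0 if either is empty)."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(value * value for value in a.values()))
    norm_b = math.sqrt(sum(value * value for value in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_match(query: str, documents: list[str]) -> str:
    """Return the stored document most similar to the query."""
    query_vector = embed(query)
    return max(documents, key=lambda doc: cosine_similarity(query_vector, embed(doc)))


# Hypothetical documents standing in for items in the vector store.
documents = [
    "bus timetable for the city centre",
    "quarterly revenue report",
    "road closures and traffic delays",
]
best = top_match("traffic delays on the road", documents)
print(best)
```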

AI Agent Python [OR-AST-AI-AGENT-PYTHON]

| Component [Component Name in SDK] | Description | Use Case | Language |

|---|---|---|---|

| Select the next stage [AI_PLANNING - Select the next stage to execute in a plan] | Select the next stage to execute in a plan and return the next executable stage | As a model builder, I want to automatically select the next stage to be executed | Python |

| AI Agent with RAG tools [AI_TOOL - AI Agent with RAG tools] | An agent provided with RAG tools. It can query for relevant data and documents from the RAG database | As an analyst, I want to easily query data and documents so that I can gather the information I need within defined parameters | Python |

| Agentic Web scraping [AI_TOOL - AI Agent with web scraping tools] | An agent provided with web scraping tools. It can scrape web pages for relevant data and documents | As an analyst, I want to be able to search web pages using an agent so that I can gather relevant information quickly and simply | Python |

| Summarise plan and generate report [AI_PLANNING - Summarise the plan and its stages to provide a plan report] | Summarise the plan and its stages to provide a concise overview of the planning process, including if the goal was achieved | As a project manager, I want to easily summarise a project plan, including if goals were met so that I can easily and quickly distribute a plan report to the wider team | Python |

| Single LLM Call [AI_TOOL - Make a single LLM call to generate a response based on the input content] | This endpoint allows you to call single agents with the provided inputs and parameters | As an analyst, I want to be able to make a request in natural language so that I can execute a variety of LLM tasks as needed | Python |

| Perform websearch [AI_TOOL - perform websearch for information] | This endpoint allows you to call a websearch agent with specified inputs and parameters | As a BA, I want to be able to include web searches in my research so that my results are enriched with the most up-to-date information from the internet | Python |

| Review plan and provide pass criteria [AI_PLANNING - Review each stage and provide feedback and pass criteria for the next planning stage] | Review each stage in a plan and provide feedback and pass criteria for the next planning stage | As a tech BA, I want to generate logical pass criteria for each stage and gain feedback so that I can review each stage in a plan more efficiently | Python |

| Make a plan [AI_PLANNING - Make a plan to solve a problem] | Generate a plan to solve complex problems using a planning agent | As a tech BA, I want to be able to prepare a marketing strategy, including internal / web research to formulate a thorough plan so that I can efficiently execute a well informed strategy | Python |

| PowerPoint Tools [AI_TOOL - AI Agent with PowerPoint tools] | An agent provided with PowerPoint tools. It can create PowerPoint presentations based on the input content | As an Insights BA, I want to easily create PowerPoint slides with an AI agent so that I can communicate insights meaningfully with relevant stakeholders | Python |

Linear Systems [OR-AST-LINEAR-SYSTEMS]

| Component [Component Name in SDK] | Description | Use Case | Language |

|---|---|---|---|

| Normal Distribution Sampler [Linear Systems - Normal Distribution Sampler] | Generates random samples from a normal distribution. It is useful for simulating real-world randomness, testing models under different conditions, or generating synthetic datasets. | As a risk management firm, we want to simulate stock market returns using random samples so that we can understand financial uncertainties. | Julia |

| Second Order Oscillator ODE Solver [Linear Systems - Second Order Ocsillator ODE Solver] | This model numerically solves a second-order differential equation that describes oscillatory systems, such as spring-mass systems, pendulums, or circuit resonators. It is widely used in physics, engineering, and control system simulations. | As a mechanical engineer, I want to simulate shock absorber dynamics so that I can predict how a car's suspension responds to bumps and road irregularities. | Julia |
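
To make the two Linear Systems components more concrete, here is a minimal Python sketch of the underlying techniques. It is not the components' Julia code. It assumes the damped oscillator form x'' + 2ζωx' + ω²x = 0 and a classical fourth-order Runge-Kutta integrator; the function names and parameters are hypothetical.

```python
import math
import random


def sample_normal(mean: float, std: float, n: int, seed=None) -> list[float]:
    """Draw n samples from a normal distribution; a fixed seed makes runs repeatable."""
    rng = random.Random(seed)
    return [rng.gauss(mean, std) for _ in range(n)]


def solve_oscillator(omega, zeta, x0, v0, t_end, dt=0.001):
    """Integrate x'' + 2*zeta*omega*x' + omega**2 * x = 0 with classical RK4.

    Returns the position and velocity (x, v) at time t_end.
    """
    def accel(x, v):
        return -2.0 * zeta * omega * v - omega ** 2 * x

    steps = max(1, round(t_end / dt))
    h = t_end / steps  # adjust the step so the last step lands exactly on t_end
    x, v = x0, v0
    for _ in range(steps):
        k1x, k1v = v, accel(x, v)
        k2x, k2v = v + 0.5 * h * k1v, accel(x + 0.5 * h * k1x, v + 0.5 * h * k1v)
        k3x, k3v = v + 0.5 * h * k2v, accel(x + 0.5 * h * k2x, v + 0.5 * h * k2v)
        k4x, k4v = v + h * k3v, accel(x + h * k3x, v + h * k3v)
        x += h * (k1x + 2 * k2x + 2 * k3x + k4x) / 6.0
        v += h * (k1v + 2 * k2v + 2 * k3v + k4v) / 6.0
    return x, v


# Undamped case: after one full period (2*pi/omega) the state returns to its start.
x_end, v_end = solve_oscillator(omega=1.0, zeta=0.0, x0=1.0, v0=0.0, t_end=2 * math.pi)
samples = sample_normal(mean=0.0, std=1.0, n=10_000, seed=42)
```

Setting `zeta` above zero adds damping, which is the case relevant to the shock absorber use case.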

Traffic Model [OR-AST-TRAFFIC-MODEL]

| Component [Component Name in SDK] | Description | Use Case | Language |

|---|---|---|---|

| Generate road graph (Bounding box) [Graph generation - Bounding box - Car] | Generates road graph from bounding box coordinates using LightOSM | As a Transport Operations manager, I want to see the road activity within a defined boundary so that I can assess the traffic flow conditions and deploy resources as needed | Julia |

| Generate graph using point and radius [Graph generation - Point and radius - Car] | This model constructs a road network graph from OpenStreetMap (OSM) data taking a city location name as input and enabling the analysis of road structures, intersections, and optimal routing paths. The generated graph can be used in transport modeling, navigation systems, or urban planning applications. | As a city planner, I want to generate a road graph so that I can simulate traffic flow changes after introducing new bus lanes. | Julia |

| Traffic Model Solver [Traffic Model Solver - Car] | This model runs traffic simulations based on the given road graph and vehicle schedules. The output can be analysed to assess vehicle delays, estimate emissions, and optimise scheduling for improved efficiency. | As a logistics company, we want to run multiple simulations in parallel to identify the most fuel-efficient delivery routes so that we can reduce travel distance while maximising on-time deliveries. | Julia |

| Calculate Shortest Path - Car [Shortest Path - Car] | Calculate the shortest path between origin and destination coordinates within a provided road graph using LightOSM | As a traffic controller, I want to know the shortest path between origin and destination, how long the journey will be and the distance covered so that I can make an informed decision on alternative routes for customers. | Julia |

| GeoJSON Shortest Car Path [Shortest Path - GeoJSON LineString - Car] | Calculate the shortest path between coordinates and return only the GeoJSON Feature Collection Line String | As a traffic controller, I want to view the shortest path between origin and destination on the map so that I can determine the quickest route for customers | Julia |

| Generate road graph (Place name) [Graph generation - Place name - Car] | Generate road graph using LightOSM | As an analyst in a Transport Agency, I want to visualise an up to date road graph so that I can develop accurate insights for the problem I am trying to solve. | Julia |
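
The shortest-path components rely on LightOSM, which is not shown here. As a rough illustration of what a shortest-path query over a road graph involves, the sketch below runs Dijkstra's algorithm on a small hand-written graph. The graph, node names, and edge weights are hypothetical, and nothing in the source confirms which algorithm the components use internally.

```python
import heapq


def shortest_path(graph, origin, destination):
    """Dijkstra's algorithm on a dict-of-dicts graph with non-negative edge weights.

    Returns (total_cost, path), or (inf, []) if the destination is unreachable.
    """
    best = {origin: 0.0}
    previous = {}
    queue = [(0.0, origin)]
    while queue:
        cost, node = heapq.heappop(queue)
        if node == destination:
            break
        if cost > best.get(node, float("inf")):
            continue  # stale queue entry; a cheaper route was already found
        for neighbour, weight in graph.get(node, {}).items():
            new_cost = cost + weight
            if new_cost < best.get(neighbour, float("inf")):
                best[neighbour] = new_cost
                previous[neighbour] = node
                heapq.heappush(queue, (new_cost, neighbour))
    if destination not in best:
        return float("inf"), []
    # Walk the predecessor links backwards to rebuild the route.
    path = [destination]
    while path[-1] != origin:
        path.append(previous[path[-1]])
    path.reverse()
    return best[destination], path


# Hypothetical road graph: node -> {neighbour: distance in km}.
road_graph = {
    "A": {"B": 4.0, "C": 1.0},
    "B": {"D": 3.0},
    "C": {"B": 2.0, "D": 7.0},
    "D": {},
}
distance, route = shortest_path(road_graph, "A", "D")
print(distance, route)
```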

Schedule Generation [OR-AST-SCHEDULE-GENERATION]

| Component [Component Name in SDK] | Description | Use Case | Language |

|---|---|---|---|

| Generate Schedule [Schedule Generation - Car] | This component creates a structured time-based schedule for vehicles in a transportation simulation. It defines vehicles' routes and departure times, enabling realistic scheduling for route planning. | As a public transit agency, we want to simulate bus routes under different conditions so that we can test that our buses run in line with a new or current timetable | Julia |

Timeseries [OR-AST-TINY-TIME-MIXERS]

| Component [Component Name in SDK] | Description | Use Case | Language |

|---|---|---|---|

| Convert to visualisable format [Timeseries convert to visualisable format - Statistics] | A supplementary component that converts the dataframe into an MDK-visualisable format | - | Python |

| Drop time column | A supplementary component that drops a specific column from the dataframe | - | - |

| Timeseries prediction | The main component, which performs time-series prediction based on the Tiny Time Mixers library. | As an emergency management agency, we want to be able to predict temperatures over the next couple of weeks so that we can decide when best to carry out preventative fire activities. | - |

Statistics [OR-AST-STATS-MODELS]

| Component [Component Name in SDK] | Description | Use Case | Language |

|---|---|---|---|

| Timeseries filtfilt function for dataframe [Timeseries filtfilt function for dataframe - statistics] | The filtfilt function applies a zero-phase filtering technique to smooth time-series data without introducing phase shifts. Unlike traditional filters that can distort the timing of signals, filtfilt processes the data both forward and backward, preserving the original alignment of peaks and troughs. This makes it ideal for applications requiring precise signal timing. | As a biomedical researcher, I want to filter ECG heart rate signals to remove high-frequency noise while preserving accurate wave patterns so that I can maintain the same levels of accuracy for analysis | Julia |

| Timeseries triple exponential smoothing for dataframe [Timeseries triple exponential smoothing for dataframe - Statistics] | Triple exponential smoothing builds on the double exponential method by incorporating a seasonality component, making it suitable for data with repeating cyclical patterns. The model accounts for trend, seasonality, and random variations, allowing for robust long-term forecasting. | As an energy provider, I want to incorporate daily usage cycles and seasonal fluctuations so that I can predict electricity consumption patterns accurately | Julia |

| Timeseries exponential smoothing for dataframe [Timeseries exponential smoothing for dataframe - Statistics] | Exponential smoothing is a forecasting method that assigns exponentially decreasing weights to past observations, giving more importance to recent values. Unlike a simple moving average, it adapts to changes more quickly, making it useful for forecasting data with no clear seasonality or trend shifts. | As a ride-sharing data scientist, I want to give higher priority to recent bookings over older data so that I can better predict future demand | Julia |

| Timeseries double exponential smoothing for dataframe [Timeseries double exponential smoothing - Statistics] | Double exponential smoothing (Holt's Method) extends exponential smoothing by introducing a trend component, making it ideal for datasets with a consistent upward or downward trajectory. The model calculates both smoothed values and a trend estimate, adjusting forecasts accordingly. | As a retailer, I want to capture both short term fluctuations and long term business expansion trends so that I can properly forecast quarterly revenue growth | Julia |

| Timeseries exponential smoothing [Tmeseries exponential smoothing - Statistics] | Exponential smoothing is a forecasting method that assigns exponentially decreasing weights to past observations, giving more importance to recent values. Unlike a simple moving average, it adapts to changes more quickly, making it useful for forecasting data with no clear seasonality or trend shifts. | As a ride-sharing data scientist, I want to give higher priority to recent bookings over older data so that I can better predict future demand | Julia |

| Timeseries triple exponential smoothing [Timeseries triple exponential smoothing - Statistics] | Triple exponential smoothing builds on the double exponential method by incorporating a seasonality component, making it suitable for data with repeating cyclical patterns. The model accounts for trend, seasonality, and random variations, allowing for robust long-term forecasting. | As an energy provider, I want to incorporate daily usage cycles and seasonal fluctuations so that I can predict electricity consumption patterns accurately | Julia |

| Simple Statistics [Simple Stats - Statistics] | Performs fundamental statistical calculations on a dataset, including mean, median, mode, variance, standard deviation, and percentiles. These measures help summarise key properties of the data, allowing users to understand central tendencies and variability. This is particularly useful for preliminary data analysis before applying more advanced models. | As a climate scientist, I want to calculate the average temperature for a region over a given period so that I can provide baseline insights before applying predictive models | Julia |

| Timeseries Auto-regressive modelling [Timeseries modelling with auto-regression - Statistics] | Auto-regressive (AR) models assume that the current value in a time series is a weighted sum of past values plus some error term. The number of past values (or "lags") used in the model is determined based on statistical tests or domain knowledge. AR models work well when data points have a strong correlation with their preceding values. | As a telecommunications company, we want to model how previous usage spikes influence future demand for bandwidth so that we can forecast network congestion | Julia |

| Timeseries filtfilt function [Timeseries filtfilt function - statistics] | The filtfilt function applies a zero-phase filtering technique to smooth time-series data without introducing phase shifts. Unlike traditional filters that can distort the timing of signals, filtfilt processes the data both forward and backward, preserving the original alignment of peaks and troughs. This makes it ideal for applications requiring precise signal timing. | As a biomedical researcher, I want to filter ECG heart rate signals to remove high-frequency noise while preserving accurate wave patterns so that I can maintain the same levels of accuracy for analysis | Julia |

| Timeseries moving average for dataframe [Timeseries moving average for dataframe - Statistics] | A moving average smooths fluctuations in time-series data by computing the average of a rolling window of previous observations. This method is useful for removing short-term noise and revealing underlying trends. A shorter window size makes the moving average more responsive to recent changes, while a longer window produces a smoother curve. | As a financial analyst, I want to be able to apply a 30-day moving average to historical stock prices so that I can identify long term price trends while ignoring daily market volatility | Julia |

| Timeseries moving average [Timeseries moving average - Statistics] | A moving average smooths fluctuations in time-series data by computing the average of a rolling window of previous observations. This method is useful for removing short-term noise and revealing underlying trends. A shorter window size makes the moving average more responsive to recent changes, while a longer window produces a smoother curve. | As a financial analyst, I want to be able to apply a 30-day moving average to historical stock prices so that I can identify long term price trends while ignoring daily market volatility | Julia |

| Timeseries double exponential smoothing [Timeseries double exponential smoothing - Statistics] | Double exponential smoothing (Holt's Method) extends exponential smoothing by introducing a trend component, making it ideal for datasets with a consistent upward or downward trajectory. The model calculates both smoothed values and a trend estimate, adjusting forecasts accordingly. | As a retailer, I want to capture both short term fluctuations and long term business expansion trends so that I can properly forecast quarterly revenue growth | Julia |

| Timeseries Auto-regressive modelling for dataframe [Timeseries modelling with auto-regression for dataframe - Statistics] | Auto-regressive (AR) models assume that the current value in a time series is a weighted sum of past values plus some error term. The number of past values (or "lags") used in the model is determined based on statistical tests or domain knowledge. AR models work well when data points have a strong correlation with their preceding values. | As a telecommunications company, we want to model how previous usage spikes influence future demand for bandwidth so that we can forecast network congestion | Julia |

| Timeseries SARIMA [Timeseries SARIMA - Statistics] | SARIMA is an advanced time-series forecasting model that combines autoregression (AR), differencing (I), and moving averages (MA), while also accounting for seasonal effects. It is particularly effective for data with predictable seasonal trends that recur at fixed intervals. | As a tourism board, we want to predict monthly visitor arrivals while accounting for seasonal travel patterns influenced by holidays, school vacations, and weather conditions. | Julia |

| Timeseries polynomial regression | Polynomial regression extends linear regression by fitting a curve to the data instead of a straight line. This is done by introducing higher-degree polynomial terms, allowing the model to capture nonlinear trends and fluctuations over time. It is useful for time-series datasets where relationships between variables change over time. | As a tech company, we want to capture how interaction levels shift in different scenarios so that we can analyse user engagement trends in response to feature updates and marketing campaigns. | Julia |
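
Three of the smoothing methods described in the table can be written in a few lines each. The sketch below is a plain-Python illustration of the textbook formulas, not the components' Julia implementation. The initialisation choices (first observation as the initial level, first difference as the initial trend) are assumptions, and the function names are hypothetical.

```python
def moving_average(values, window):
    """Trailing moving average; returns len(values) - window + 1 points."""
    if window < 1 or window > len(values):
        raise ValueError("window must be between 1 and len(values)")
    return [
        sum(values[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(values))
    ]


def exponential_smoothing(values, alpha):
    """Simple exponential smoothing: s[0] = y[0], s[t] = alpha*y[t] + (1 - alpha)*s[t-1]."""
    smoothed = [values[0]]
    for y in values[1:]:
        smoothed.append(alpha * y + (1 - alpha) * smoothed[-1])
    return smoothed


def holt_forecast(values, alpha, beta, horizon):
    """Double exponential smoothing (Holt's method).

    Tracks a level and a trend, then extrapolates `horizon` steps ahead.
    Needs at least two observations to initialise the trend.
    """
    level = values[0]
    trend = values[1] - values[0]
    for y in values[1:]:
        previous_level = level
        level = alpha * y + (1 - alpha) * (level + trend)
        trend = beta * (level - previous_level) + (1 - beta) * trend
    return [level + step * trend for step in range(1, horizon + 1)]


series = [1.0, 2.0, 3.0, 4.0, 5.0]
print(moving_average(series, window=3))
print(exponential_smoothing([10.0, 20.0, 30.0], alpha=0.5))
print(holt_forecast(series, alpha=0.5, beta=0.5, horizon=2))
```

A larger `alpha` weights recent observations more heavily, matching the ride-sharing use case above. On a perfectly linear series, Holt's method continues the line.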

MDK Experiment Manager [MDK-EXPERIMENT-MANAGER]

| Component [Component Name in SDK] | Description | Use Case | Language |

|---|---|---|---|

| Trigger Workflow [Trigger - Workflow] | Asynchronously trigger a workflow execution | - | Golang |

| Trigger multiple Workflows [Multiple Trigger - Workflow] | Asynchronously trigger multiple workflow executions | - | Golang |

| Generate string data [Data constant - String] | Provides constant string data values | - | Golang |

| Generate boolean data [Data constant - boolean] | Provides constant boolean data values | - | Golang |

| Generate float data [Data constant - float] | Provides constant float data values | - | Golang |

| Generate integer data [Data constant - integer] | Provides constant integer data values | - | Golang |

| Generate array data [Data constant - array] | Provides constant array data values | - | Golang |

| Generate object data [Data constant - object] | Provides constant object data values | - | Golang |

| Redis Read - String | Reads string values from Redis by key | - | Golang |

| Redis Read - Object | Reads object/dict values from Redis by key | - | Golang |

| Redis Read - List String | Reads string values from Redis for multiple keys | - | Golang |

| Redis Read - List Object | Reads object/dict values from Redis for multiple keys | - | Golang |

Operator

| Component [Component Name in SDK] | Description | Use Case | Language |

|---|---|---|---|

| Extract Stage Key Prefix | Extracts the stage key prefix from the input | - | - |

| Feedback Loop | Combines stage summary and prompt into planner | - | - |

| Merge stage and task outputs | Merge outputs from the AI tasks and selected stage | - | - |